by Aubrey Hansen

“Infinitely scalable”, “Zero transaction costs”, “Effective sharding”. Reading through the PR material provided by projects developing DAG technology, it might appear as if the second coming of our lord and savior Nakamoto is upon us. DAG ledgers are generally described as “blockchain 3.0”, solving most, if not all, of the pesky shortcomings of a technology that is less than a decade old.

While DAG technology is indeed a highly promising development in the realm of decentralized ledgers, the reality is, as always, slightly more complicated. To separate the wheat from the chaff of broken promises and buzzwords, we decided to dive a bit deeper to provide you with a short overview of what DAGs are, what they’re good for, and where they tend to fail.

What’s a DAG?

DAGs (or Directed Acyclic Graphs) are, just like blockchains, distributed ledgers. This means that they represent a record of events in a network (such as transactions), which is shared and agreed upon by all network participants. Blockchains achieve this universal record by grouping transactions into so-called blocks, which are verified at (relatively slow) intervals and cryptographically linked to the block before them. This creates an immutable chain of events that can’t be argued with. Hence a blockchain.
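To make the “cryptographically linked” part a bit more concrete, here’s a minimal Python sketch of a hash-linked chain. It’s a toy, not how Bitcoin or any real client does it: the block layout and the names `block_hash`, `build_chain` and `is_valid` are made up for illustration, and there is no mining or networking involved.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the very first block


def block_hash(block):
    """Hash a block's full contents, including the hash of its predecessor."""
    payload = json.dumps(block, sort_keys=True).encode()
    return hashlib.sha256(payload).hexdigest()


def build_chain(batches):
    """Group each batch of transactions into a block linked to the previous one."""
    chain = []
    prev = GENESIS_HASH
    for transactions in batches:
        block = {"transactions": list(transactions), "prev_hash": prev}
        chain.append(block)
        prev = block_hash(block)
    return chain


def is_valid(chain):
    """Check that every block still points at the true hash of its predecessor."""
    prev = GENESIS_HASH
    for block in chain:
        if block["prev_hash"] != prev:
            return False
        prev = block_hash(block)
    return True


chain = build_chain([["alice->bob:1"], ["bob->carol:1"]])
print(is_valid(chain))  # True

# Rewriting history in block 0 changes its hash, so block 1 no longer matches.
chain[0]["transactions"][0] = "alice->bob:100"
print(is_valid(chain))  # False
```

Changing anything in an old block breaks the link in every block after it, which is where the “can’t be argued with” property comes from.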

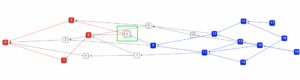

DAGs, on the other hand, create a direct link between events in the network, which allows them to perform transactions “on the fly” so to speak. This means that a node wishing to perform a transaction is required to verify at least two earlier transactions, creating an ordered sequence of linked events. Hence a “Directed Graph”. And because a transaction can only point at transactions that already exist, the links never loop back on themselves, which is the “Acyclic” part. This interdependence makes it so that a transaction needs to fit into the larger picture of the network’s state in order to be considered valid, creating once again a shared and agreed-upon record.

So what?

To get a feel for what this actually means, it is highly recommended to play around with IOTA’s Visual Tangle Simulator.

In this example, transaction number 6 is required to validate transactions #3 and #5, further validating #1 and #0 while doing so. Transaction #6 will now sit there quietly and wait for #9 to return the favor. Tinkering with transaction amount and frequency will make it apparent why this structure is nicknamed “The Tangle”.
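If you’d rather poke at this in code than in a simulator, the walk-through above can be sketched in a few lines of Python. Keep in mind that this is a toy: the `Tangle` class, its method names and the exact wiring of transactions #1 to #5 are assumptions chosen so that #6 ends up approving #3 and #5 as described. There are no signatures, balances or proof of work here, only the graph.

```python
class Tangle:
    """Toy DAG ledger: each transaction records which earlier ones it approves."""

    def __init__(self):
        self.parents = {0: ()}  # transaction 0 is the genesis and approves nothing

    def add(self, tx_id, approves):
        approves = tuple(dict.fromkeys(approves))  # drop duplicates, keep order
        if tx_id in self.parents:
            raise ValueError(f"transaction {tx_id} already exists")
        missing = [p for p in approves if p not in self.parents]
        if missing:
            # Only existing transactions can be approved, so no cycle can form.
            raise ValueError(f"unknown transactions: {missing}")
        required = min(2, len(self.parents))  # two, once two exist
        if len(approves) < required:
            raise ValueError(f"must approve at least {required} transactions")
        self.parents[tx_id] = approves

    def approved_by(self, tx_id):
        """Everything tx_id validates, directly or indirectly."""
        seen = set()
        stack = list(self.parents[tx_id])
        while stack:
            current = stack.pop()
            if current not in seen:
                seen.add(current)
                stack.extend(self.parents[current])
        return seen

    def tips(self):
        """Transactions nobody has approved yet."""
        approved = {p for ps in self.parents.values() for p in ps}
        return set(self.parents) - approved


tangle = Tangle()
tangle.add(1, [0])
tangle.add(2, [0, 1])
tangle.add(3, [0, 1])
tangle.add(4, [1, 2])
tangle.add(5, [1, 3])
tangle.add(6, [3, 5])

print(sorted(tangle.approved_by(6)))  # [0, 1, 3, 5]
print(sorted(tangle.tips()))          # [4, 6]
```

In this wiring, #6 is a “tip”: it has done its share of validating, but nothing has approved it yet.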

Sharp-eyed readers might at this stage already recognize both the enormous potential of this approach and its capacity for failure. But first things first: let’s start with the good stuff, and there’s plenty of it.

Scalability

Traditional blockchains have two attributes that make the network less efficient as it grows. The first has to do with how blocks are mined, or validated. The second concerns the way in which the record of validated blocks is stored.

In a blockchain network, miners, or validating nodes, compete to sign the next block. No matter how many nodes participate in this process, it is always only one of them which eventually seizes the opportunity to do so. Regardless of the network’s size, and of how much hashing power it accumulates, the entire thing is essentially always powered by the computation capacity of a single machine. With transaction density increasing in growing networks, this method becomes very slow, very quickly.

Secondly, the record of all past transactions (i.e. the blockchain) has to be stored in its entirety on all network nodes. The Bitcoin blockchain, for example, is currently about 175 GB in size. Ethereum’s smart contract graveyard has already exceeded 1 terabyte. Multiply this by the myriad nodes in the respective networks, and you get the picture.

DAGs present a potential solution for both of these problems.

Given the “tangled” nature of a DAG’s validation process, the entire network is constantly engaged in validating transactions. Since every transaction entails the validation of two previous ones, transactions are processed faster as the network grows, instead of creating an increasingly narrow bottleneck (at least in theory, but we’ll get to that).

Maybe even more importantly, a DAG’s ledger isn’t stored in a single, ever-growing file that has to be replicated on each and every network node. Instead of smooshing all transactions together, the ledger is chopped up into chunks, or “shards”, making it so that each node only stores what’s relevant to its own transaction and validation history. Eventually, all shards overlap to some extent and connect like some sort of puzzle, which, reconstructed, constitutes the ledger in its entirety.

Both aspects, “sharding” as well as built-in throughput growth, make DAGs much more scalable than blockchains, but there’s more.

Zero transaction fees?

Well, sort of.

The lack of blocks, and of the mining thereof, opens the door to zero, or close to zero, transaction costs. Since every transaction broadcast by a given node entails the validation of at least two previous ones, transactions “pay for themselves” so to speak. There’s no need to bribe a miner to do unnecessary work just to move tokens around. This alone would be a killer feature and immensely useful for microtransactions and IoT use-cases.

However, theory and practice don’t always go hand in hand.

So here’s the catch

Remember when we said earlier that every DAG transaction “needs to fit into the larger picture of the network’s state in order to be considered valid”? So, well, that’s apparently not as airtight as advertised, especially for small networks.

Since at no stage does a single miner validate everything that has happened since the last block, there’s some wiggle room for shenanigans. The order in which transactions are validated might not be in line with the order in which they were broadcast, creating a situation in which an attacker could “double spend”, or spend the same dollar twice. If that happens, everyone’s in trouble.
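Here’s a small Python sketch of how such a double spend can slip through. It is a deliberately simplified model, not any project’s actual validation logic: the `TXS` data, the coin label `"coin-A"` and the helpers `cone` and `conflicts_in_cone` are hypothetical. The assumption is that a validator only checks the slice of history a transaction approves, directly or indirectly.

```python
from collections import defaultdict

# tx_id -> (coin spent, or None) and the earlier transactions it approves.
# Transactions 1 and 2 both spend the same coin on separate branches.
TXS = {
    0: (None, ()),          # genesis
    1: ("coin-A", (0,)),    # first spend of coin-A
    2: ("coin-A", (0,)),    # second spend of coin-A (the double spend)
    3: (None, (0, 1)),      # has only seen the branch containing 1
    4: (None, (0, 2)),      # has only seen the branch containing 2
    5: (None, (3, 4)),      # first transaction to see both branches
}


def cone(txs, tx_id):
    """tx_id plus everything it approves, directly or indirectly."""
    seen = set()
    stack = [tx_id]
    while stack:
        current = stack.pop()
        if current not in seen:
            seen.add(current)
            stack.extend(txs[current][1])
    return seen


def conflicts_in_cone(txs, tx_id):
    """Coins spent more than once within the history visible from tx_id."""
    spenders = defaultdict(list)
    for tx in sorted(cone(txs, tx_id)):
        coin = txs[tx][0]
        if coin is not None:
            spenders[coin].append(tx)
    return {coin: ids for coin, ids in spenders.items() if len(ids) > 1}


print(conflicts_in_cone(TXS, 3))  # {}
print(conflicts_in_cone(TXS, 4))  # {}
print(conflicts_in_cone(TXS, 5))  # {'coin-A': [1, 2]}
```

Seen from #3 or from #4, everything looks fine. The conflict only shows up once something references both branches, and by then the network has to decide which of the two spends counts.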

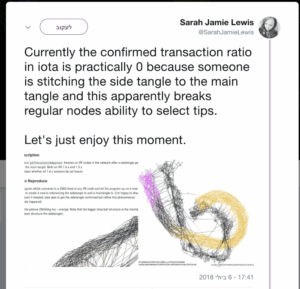

To mitigate this situation, most leading DAG projects sort of cheat. IOTA has something called a “Coordinator”, which essentially is a centralized network element within the DAG that enforces a linear order of transactions. Other projects have “Masternodes” which are either appointed or elected and kind of do the same thing.

Overall, the mesmerizing beauty of the tangle structure comes at the price of a serious dependability problem. DAGs perform outstandingly in certain cases, but become unstable, stagnant, or unreliable in others, a situation most DAG projects deal with by introducing centralized elements.

And then this happened.

Alternatives are of course being worked on. CyberVein, for example, introduces a DAG-specific consensus mechanism called Proof of Contribution. Proof of Contribution measures the amount of disk space a node donates to store parts of the ledger’s transaction history (the “shards” from above) and compensates accordingly. Since disk space is a scarce resource, PoC serves as a barrier to creating fictitious attacker nodes, making attacks costly and infeasible, just as PoW does for Bitcoin.

This allows CyberVein to maintain a decentralized “Full Node” network which performs the same function as IOTA’s Coordinator, while remaining decentralized and outside of the company’s control. This fixes the DAG centralization problem, but it also means that transactions are no longer free. However, they are still considerably cheaper than Bitcoin or Ethereum transactions.

Given all this information, what is your take? Are DAGs the ledger of the future, or just another weird animal in the blockchain zoo with limited use-cases? It’s your call.